[A] computer is a stupid machine with the ability to do incredibly smart

things, while computer programmers are smart people with the ability to

do incredibly stupid things. They are, in short, a perfect match.

-Bill Bryson

A computer cuts your work in half and gives you back the bloody stumps.

-Unattributed

A computer is only as good as the people who are employed to replace the

people who were made redundant by the computer.

-Unattributed

A computer lets you make more mistakes faster than any invention in

human history with the possible exceptions of handguns and tequila.

-Mitch Ratcliffe

A crash reduces

Your expensive computer

To a simple stone.

-(If Error Messages Were Haiku, www.pcpoetry.com)

A distributed system is one in which the failure of a computer you

didn't even know existed can render your own computer unusable.

-Leslie Lamport

A lot of what appears to be progress is just so much technological

rococo.

-Bill Gray

A successful technology creates problems that only it can solve.

-Alan Kay

All programmers are playwrights and all computers are lousy actors.

-Unattributed

All scientifically possible technology and social change predicted in

science fiction will come to pass, but none of it will work properly.

-Neil Gaiman

All technology should be assumed guilty until proven innocent.

-David Ross Brower

An idiot with a computer is a faster, better idiot.

-Rich Julius

Any idiot can use a computer. Many do.

-Unattributed

Any problem in computer science can be solved with another layer of

indirection. But that usually will create another problem.

-David Wheeler

Any research done on how to efficiently use computers has been long lost

in the mad rush to upgrade systems to do things that aren't needed by

people who don't understand what they are really supposed to do with

them.

-Graham Reed

Any sufficiently advanced technology is indistinguishable from magic.

-Arthur C. Clarke

Any sufficiently advanced technology is indistinguishable from a rigged

demo.

-James Klass

Artificial intelligence is the study of how to make real computers act

like the ones in movies.

-Unattributed

As far as we know, our computer has never had an undetected error.

-Unattributed

As practiced by computer science, the study of programming is an unholy

mixture of mathematics, literary criticism, and folklore.

-B.A. Sheil

Asking if computers can think is like asking if submarines can swim.

-Unattributed

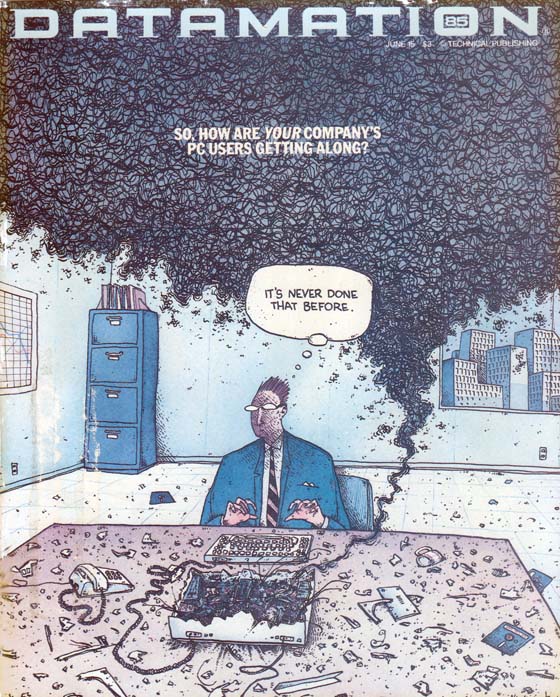

At the source of every error which is blamed on the computer you will

find at least two human errors, including the error of blaming it on the

computer.

-Unattributed

Bad command or file name. Good typing, though.

-Unattributed (Computer error message)

Bad things come in threes. However, when dealing with computers, the

fourth thing is always the start of the next group of three.

-Unattributed

Cheese in an aerosol can is the greatest advance in technology since

fire.

-James Angove

Computer Science is no more about computers than astronomy is about

telescopes.

-E.W. Dijkstra

Computer Science: A study akin to numerology and astrology, but lacking

the precision of the former and the success of the latter.

-Stan Kelly-Bootle

Computers are like Old Testament gods; lots of rules and no mercy.

-Joseph Campbell

Computers are man's attempt at designing a cat: it does whatever it

wants, whenever it wants, and rarely ever at the right time.

-Unattributed

Computers are such time-saving devices. In fact, I've just spent the

last three years trying to print out an envelope.

-Elayne Boosler

Computers can do better than ever what needn't be done at all. Making

sense is still a human monopoly.

-Marshall McLuhan

Computers can now keep a man's every transgression recorded in a

permanent memory bank, duplicating with complex programming and

intricate wiring a feat his wife handles quite well without fuss or

fanfare.

-Lane Olinghouse

Computers can still barely open a printer port, much less the pod bay

doors.

-Lee Gomes

Computers make it easier to do a lot of things, but most of the things

they make it easier to do don't need to be done.

-Andy Rooney

Don't anthropomorphize computers. They hate it when you do that.

-Unattributed

Don't explain computers to laymen. Simpler to explain sex to virgins.

-Robert A. Heinlein

Engineers are always honest in matters of technology and human

relationships. That's why it's a good idea to keep engineers away from

customers, romantic interests, and other people who can't handle the

truth.

-Unattributed (From Engineers Explained)

Enter any eleven-digit prime number to continue.

-Unattributed (Computer command prompt)

Even though today's technology provides us with mountains of data, it is

useless without judgment.

-Felix G. Rohatyn

Every time you turn on your new car, you're turning on 20

microprocessors. Every time you use an ATM, you're using a computer.

Every time I use a set top box or game machine, I'm using a computer.

The only computer you don't know how to work is your Microsoft computer,

right?

-Scott McNealy

For a list of all the ways technology has failed to improve the quality

of life, please press three.

-Alice Kahn

For a successful technology, reality must take precedence over public

relations, for Nature cannot be fooled.

-Richard P. Feynman, in his analysis of the Space Shuttle Challenger explosion. Academy Award winner William Hurt portrays Dr. Feynman in "The Challenger Disaster," a drama based on the late Nobel Prize-winning theoretical physicist's final book, "What Do You Care What Other People Think?" If you missed last night's premiere, it will be rebroadcast tonight at 9 pm on The Science Channel.

Having a computer is like having a small, silicon version of Gary Busey

on your desk. You never know what's going to happen.

-Bill Maher

Humanity is acquiring all the right technology for all the wrong reasons.

-Buckminster Fuller

I have a computer, a vibrator and pizza delivery. Why should I leave the

house?

-Unattributed

I have always wished that my computer would be as easy to use as my

telephone. My wish has come true. I no longer know how to use my

telephone.

-Bjarne Stroustrup

I may be just an empty flesh terminal relying on technology for all my

ideas, memories and relationships, but I am confident that all of that,

everything that makes me a unique human being, is still out there,

somewhere, safe in the theoretical storage space owned by giant

multi-national corporations.

-Stephen Colbert

I think computer viruses should count as life. I think it says something

about human nature that the only form of life we have created so far is

purely destructive. We've created life in our own image.

-Stephen Hawking

I think everyone in this country should learn to program a computer.

Everyone should learn a computer language because it teaches you how to

think. I think of computer science as a liberal art.

-Steve Jobs

I think it is time we learned the lesson of our century: that the

progress of the human spirit must keep pace with technological and

scientific progress, or that spirit will die. It is incumbent on our

educators to remember this; and music is at the top of the spiritual

must list.

-Leonard Bernstein

I was shocked upon viewing Internet porn while surfing the Web last

night. Then I realized my wife must have wired the mouse on our computer.

-John Alejandro King (The Covert Comic)

If moral behavior were simply following rules, we could program a

computer to be moral.

-Samuel P. Ginder

If the Catholic church couldn't stop Galileo, then governments won't be

able to stop things now.

-Carlo de Benedetti (re: regulation of information technology)

If we had a reliable way to label our toys good and bad, it would be

easy to regulate technology wisely. But we can rarely see far enough

ahead to know which road leads to damnation. Whoever concerns himself

with big technology, either to push it forward or to stop it, is

gambling in human lives.

-Freeman Dyson

If you can't beat your computer at chess, try kickboxing.

-Unattributed

If you don't know how to do something, you don't know how to do it with

a computer.

-Unattributed

If you put tomfoolery into a computer, nothing comes out but tomfoolery.

But this tomfoolery, having passed through a very expensive machine, is

somehow ennobled, and no one dares to criticize it.

-Pierre Gallois

Imagine if every Thursday your shoes exploded if you tied them the usual

way. This happens to us all the time with computers, and nobody thinks

of complaining.

-Jef Raskin

In a way, staring into a computer screen is like staring into an

eclipse. It's brilliant and you don't realize the damage until it's too

late.

-Bruce Sterling

In all technologically 'advanced' countries, fashion has replaced

tradition, so that involuntary membership in a society can no longer

provide a feeling of community.

-W.H. Auden

In computer science, we stand on each other's feet.

-Brian K. Reid

In the computer business, there are three kinds of lies: lies, damned

lies, and benchmarks.

-Unattributed

In the long run, everything is a toaster.

-Bruce Greenwald (on innovative technologies)

In the old days, writers used to sit in front of a typewriter and stare

out of the window. Nowadays, because of the marvels of convergent

technology, the thing you type on and the window you stare out of are

now the same thing.

-Douglas Adams

It is only when science asks why, instead of simply describing how, that

it becomes more than technology. When it asks why, it discovers

Relativity. When it only shows how, it invents the atomic bomb, and then

puts its hands over its eyes and says, "My God, what have I done?"

-Ursula K. Le Guin

It's a truism in technological development that no silver lining comes

without its cloud.

-Bruce Sterling

Let's be frank, the Italians' technological contribution to humankind

stopped with the pizza oven.

-Bill Bryson

Levitt's First Law of Information Technology: If it's free, adopt it.

-Unattributed

Man is the best computer we can put aboard a spacecraft... and the only

one that can be mass produced with unskilled labor.

-Wernher von Braun

Memory is like an orgasm. It's a lot better if you don't have to fake

it.

-Seymour Cray (re: computer virtual memory)

Misuse of reason might yet return the world to pre-technological night;

plenty of religious zealots hunger for just such a result, and are happy

to use the latest technology to effect it.

-A.C. Grayling

Most undergraduate degrees in computer science these days are basically

Java vocational training.

-Alan Kay

My perception was/is that while the rest of the computer world was

striving for Fault Tolerant Software, Microsoft was working on Fault

Tolerant Users.

-John Robinson

Never let a computer know you're in a hurry.

-Unattributed

Never trust a computer you can't throw out a window.

-Steve Wozniak

Once a new technology rolls over you, if you're not part of the

steamroller, you're part of the road.

-Stewart Brand

Our entire much-praised technological progress, and civilization

generally, could be compared to an axe in the hand of a pathological

criminal.

-Albert Einstein

Part of the inhumanity of the computer is that, once it is competently

programmed and working smoothly, it is completely honest.

-Isaac Asimov

PCMCIA stands for either Personal Computer Memory Card International

Association or People Can't Memorize Computer Industry Acronyms.

-Unattributed

Read, read, read and put away computers. Forget the Internet, that's all

crap.

-Ray Bradbury

Reading computer manuals without the hardware is as frustrating as

reading sex manuals without the software. In both cases the cure is

simple though usually very expensive.

-Arthur C. Clarke

Science is everything we understand well enough to explain to a

computer. Art is everything else.

-Donald Knuth

Science is to computer science as hydrodynamics is to plumbing.

-Stan Kelly-Bootle

Some technologies do their job perfectly and tend to stick around. The

spoon is one example, the lawn-roller another. Paper may well be a third.

-Unattributed (From The Economist)

Technological man can't believe in anything that can't be measured,

taped, or put into a computer.

-Clare Boothe Luce

Technological progress has merely provided us with more efficient means

for going backwards.

-Aldous Huxley

Technology [is] the knack of so arranging the world that we need not

experience it.

-Max Frisch

Technology frightens me to death. It's designed by engineers to impress

other engineers, and they always come with instruction booklets that are

written by engineers for other engineers- which is why almost no

technology ever works.

-John Cleese

Technology is anything that wasn't around when you were born.

-Alan Kay

Technology is dominated by two types of people: those who understand

what they do not manage, and those who manage what they do not

understand.

-Unattributed

Technology is not in itself opposed to spirituality and to religion. But

it presents a great temptation.

-Thomas Merton

Technology is really civilization, let's face it.

-Arthur C. Clarke

Technology is so much fun but we can drown in our technology. The fog of

information can drive out knowledge.

-Daniel J. Boorstin

Technology makes it possible for people to gain control over everything,

except over technology.

-John Tudor

Technology today is the campfire around which we tell our stories.

There's this attraction to light and to this kind of power, which is

both warm and destructive.

-Laurie Anderson

That's the thing about people who think they hate computers. What they

really hate is lousy programmers.

-Jerry Pournelle

The British don't make computers because they never figured out how to

make them leak oil.

-Unattributed

The Buddha resides as comfortably in the circuits of a digital computer

or the gears of a cycle transmission as he does at the top of a mountain.

-Robert Pirsig

The computer industry has frequently borrowed from mythology: Witness

the sprites in computer graphics, the demons in artificial intelligence,

and the trolls in the marketing department.

-Jeff Meyer

The computer industry is a chicken on growth hormones, sloshing around

in a nutrient bath with its head cut off.

-Peter Sugarman

The computer is a moron.

-Peter Drucker

The computer revolution hasn't started yet. Don't be misled by the

enormous flow of money into bad de facto standards for unsophisticated

buyers using poor adaptations of incomplete ideas.

-Alan Kay

The computer saves man a lot of guesswork, but so does the bikini.

-Evan Esar

The difference between e-mail and regular mail is that computers handle

e-mail, and computers never decide to come to work one day and shoot all

the other computers.

-Jamais Cascio

The entire body of computer science can be viewed as nothing more than

the development of efficient methods for the storage, transportation,

encoding, and rendering of pornography.

-Unattributed

The fault lies not with our technologies but with our systems.

-Roger Levien

The first time a person gets a screwdriver, he's going to go around the

house tightening all the screws, whether they need it or not. There's no

reason a computer will not be similarly abused.

-Theodore K. Robb

The goal of Computer Science is to build something that will last at

least until we've finished building it.

-Unattributed

The human race has today the means for annihilating itself- either in a

fit of complete lunacy, i.e., in a big war, by a brief fit of

destruction, or by careless handling of atomic technology, through a

slow process of poisoning and of deterioration in its genetic structure.

-Max Born

The Internet was done so well that most people think of it as a natural

resource like the Pacific Ocean, rather than something that was

man-made. When was the last time a technology with a scale like that was

so error-free? The Web, in comparison, is a joke. The Web was done by

amateurs.

-Alan Kay

The most likely way for the world to be destroyed, most experts agree,

is by accident. That's where we come in; we're computer professionals.

We cause accidents.

-Nathaniel Borenstein

The newest computer can merely compound, at speed, the oldest problem in

the relations between human beings, and in the end the communicator will

be confronted with the old problem, of what to say and how to say it.

-Edward R. Murrow

The only thing God didn't do to Job was give him a computer.

-I.F. Stone

The only truly portable computer language is profanity.

-Unattributed

The power to hurt... has evolved in a direct relationship to

technological advancement.

-Roger Zelazny

The protean nature of the computer is such that it can act like a

machine or like a language to be shaped and exploited.

-Alan Kay

The real problem of humanity is the following: we have paleolithic

emotions; medieval institutions; and god-like technology. And it is

terrifically dangerous, and it is now approaching a point of crisis

overall.

-E.O. Wilson

The Republic of Technology where we will be living is a feedback world.

-Daniel J. Boorstin

The Web brings people together because no matter what kind of a twisted

sexual mutant you happen to be, you've got millions of pals out there.

Type in "Find people that have sex with goats that are on fire" and the

computer will ask, "Specify type of goat."

-Richard Jeni

The world is just filling up with more and more idiots! And the computer

is giving them access to the world! They're spreading their stupidity!

At least they were contained before- now they're on the loose everywhere!

-Harlan Ellison

There are more computers running Windows than VMS. There are also more

cockroaches than humans.

-Kevin G. Barkes

There are two kinds of computer users: those who have lost data and

those who will lose data.

-Unattributed

There is a computer disease that anybody who works with computers knows

about. It's a very serious disease and it interferes completely with the

work. The trouble with computers is that you play with them.

-Richard P. Feynman

There is an evil tendency underlying all our technology- the tendency to

do what is reasonable even when it isn't any good.

-Robert Pirsig

There is no data to support that computers make business more

productive... most companies have merely found faster and cheaper ways

to do dumb things.

-Gary Loveman

There is no escaping from ourselves. The human dilemma is as it has

always been, and we solve nothing fundamental by cloaking ourselves in

technological glory.

-Neil Postman

This computer makes me all frowny with pure nougat-filled hatred!

-Jhonen Vasquez

Unlike human beings, computers possess the truly profound stupidity of

the inanimate.

-Bruce Sterling

We are reaching the stage where the problems we must solve are going to

become insoluble without computers. I do not fear computers. I fear the

lack of them.

-Isaac Asimov

We are stuck with technology when what we really want is just stuff that

works.

-Douglas Adams

We build our computer [systems] the way we build our cities: over time,

without a plan, on top of ruins.

-Ellen Ullman

We've arranged a civilization in which most crucial elements profoundly

depend on science and technology. We have also arranged things so that

almost no one understands science and technology. This is a prescription

for disaster. We might get away with it for a while, but sooner or later

this combustible mixture of ignorance and power is going to blow up in

our faces.

-Carl Sagan

While modern technology has given people powerful new communication

tools, it apparently can do nothing to alter the fact that many people

have nothing useful to say.

-Lee Gomes

Whom computers would destroy, they must first drive mad.

-Unattributed

Why is it drug addicts and computer aficionados are both called users?

-Clifford Stoll

Without software, a computer is just a lump of plastic- whereas with

software, it's a lump of plastic that can permanently destroy critical

data.

-Dave Barry

Writing is a slow and a difficult process mentally. How you physically

render the words onto a screen or a page doesn't help you. I'll give you

this example. When words had to be carved into stone, with a chisel, you

got the Ten Commandments. When the quill pen had been invented and you

had to chase a goose around the yard and sharpen the pen and boil some

ink and so on, you got Shakespeare. When the fountain pen came along,

you got Henry James. When the typewriter came along, you got Jack

Kerouac. And now that we have the computer, we have Facebook. Are you

seeing a trend here?

-P.J. O'Rourke

Yesterday it worked

Today it is not working

Windows is like that

-(If Error Messages Were Haiku, www.pcpoetry.com)

-----

NFPE- NON-FATAL PROCESSING ERROR:

ITC - IGNORING THE CONTRACTOR

Remember that potential race condition I warned you about in the coding

implementation meetings? You know, the one you condescendingly dismissed

in front of your in-house staff of snickering, cognitively challenged

ex-baristas? The condition that could never happen 'in the real world'

and therefore could be ignored?

Guess what, Skippy? Some other process on the system- perhaps one from that odd location called

'reality'- just changed the offset into the next available customer

account number table.

Fortunately for you, I ignored your explicit refusal to authorize the time necessary to write the code to

lock and release the table offset. I did it on my own time out of a

sense of professional pride and responsibility. If I hadn't, this

application- and, through the resulting series of cascading failures,

your entire production system- would have reduced this server to a

puddle of molten silicon.

The arcane segmentation fault it would have thrown would have corrupted

the entire account number sequencing mechanism. Your crack team of

outsourced, clueless code monkeys would have taken weeks to identify the

cause, let alone correct it. And who are we kidding? You would have been

on the phone to me in under an hour, pleading- no, demanding- that I

supply a patch, immediately and at no charge, because it's in a part of

the code that I wrote and, therefore, is my fault, despite the fact it

behaved exactly in the inane manner you decreed.

I would have then directed you here: http://tinyurl.com/nak5n7c

It's a capture of that portion of the aforementioned video conference meeting

where I warned you about this problem and spent ten minutes describing

situations in which the condition could occur- and your response,

accompanied by the smirks and giggles of your obsequious minions.

This message will appear in the production run log file only this one time

and will probably not be seen by anyone, since you only check log files

when something crashes and burns. You never check for non-fatal

processing errors that should be corrected but aren't because that would

be contrary to your policy of ignoring the smoke emanating from your hat

until your hair ignites.

Anyway, in the unlikely event someone does read this, you should also check the report date function

two modules down. As written, the end of month summary publication will

at some point display a cover date of February 30. I pointed this out in

our last meeting and would have corrected it, but it required access to

another function in another module which I couldn't access. You said

you'd have Bjorn fix it. Let me tell you about Bjorn. His real name is

Walter. He changed it to Bjorn because he thought it would improve his

chances of being hired. Walter is only vaguely aware of his surroundings

and, if you look right now, is wearing mismatched socks.

You're welcome.

And I'm still waiting for that last check.

Categories: Computers, Quotes on a topic, Technology
